Why Data Scientists Should Adopt Machine Learning (ML) Pipelines

MLOps in Practice — as a data scientist, are you handing over a notebook or an ML pipeline to your ML engineers or DevOps engineers for the ML model to be deployed in a production environment?

👋 Hi folks, thanks for reading my newsletter! My name is Yunna Wei, and I write about the modern data stack, MLOps implementation patterns and data engineering best practices. The purpose of this newsletter is to help organizations select and build an efficient Data+AI stack with real-world experiences and insights, and to help individuals build their data and AI careers with deep-dive tutorials and guidance. Please consider subscribing if you haven’t already, and reach out on LinkedIn if you ever want to connect!

Background

In my previous articles:

Learn the Core of MLOps — Building Machine Learning (ML) Pipelines

MLOps in Practice — De-constructing an ML Solution Architecture into 10 components

I talked about the importance of building ML pipelines. In today’s article, I will dive deeper into ML pipelines and explain in detail:

Why it is necessary and important to build ML pipelines

What the key components of an ML pipeline are

Why and how data scientists should adopt ML pipelines

Why is it necessary and important to build ML pipelines?

It is quite common for data scientists to start developing ML models in a notebook environment. Within a notebook, they experiment with different datasets, different feature engineering techniques, and different combinations of parameters and hyperparameters, in order to find the best-performing ML model. After what can be multiple rounds of performance tuning and feature engineering, they settle on a model. They then validate this model’s performance with test data to make sure the model is not overfitted and that it meets the business requirements. It is very likely that the model validation code is developed and run in the same notebook environment. When they are happy with the overall model performance, they hand over a notebook (with code potentially covering data ingestion, data exploration, feature engineering, model training and logging, and model validation) to an ML engineer or DevOps engineer, for the model to be deployed in a production environment.

However, there are many problems that could arise from this handover process:

Slow and manual iterations — When an issue is detected in the deployed ML system, data scientists need to go back to their notebooks to find a solution and hand it over to the production engineers again so the change can be deployed to the production environment. The same process repeats every time the model needs to be updated, so the handovers never really end. This makes iteration very slow. What’s worse, slow and manual iteration could potentially cause significant business loss.

Duplicated effort — It is very likely that code produced by data scientists in a notebook environment is not production-ready. Hence, ML engineers need to put in the effort to rewrite and test the code and convert it into modules for production deployment. An ML pipeline can reduce this code-rewriting effort significantly. I will explain why later in this article.

Error prone — ML applications are quite dependency-reliant, meaning data scientists need to import various external libraries to assist the model training process. For example, they generally use pandas for data cleaning and feature engineering, ML/DL frameworks for feature and model development, and NumPy for array computation. Beyond these popular libraries, they are also likely to use some niche libraries for their particular environment. Because of this dependency-reliant nature, errors can be raised when the library versions used in the production environment do not match the ones used in the development environment. In the previous point, we mentioned that there is a lot of code rewriting when the notebook gets handed over to the ML engineers. This rewriting can also introduce unintentional errors, such as misconfigured model hyperparameters, an unbalanced training and test split, or data leakage caused by misapplied feature engineering logic.

Hard to scale — Because a lot of time and effort is spent on this handover process, it is very difficult for the team of data scientists and ML engineers to scale. If organizations want to develop more ML applications, they need to recruit more data scientists and engineers. Therefore, getting rid of this manual handover between data scientists and ML engineers can significantly improve team efficiency, so that more ML applications can be built with limited resources.
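To make the dependency mismatch described under "Error prone" concrete, here is a minimal sketch of a check that compares the library versions recorded at development time with what is installed in the current environment. It uses only Python's standard `importlib.metadata`. The function names and the pinned versions are hypothetical and chosen for illustration; a real project would more likely rely on a lock file or a container image.

```python
from importlib import metadata


def installed_versions(packages):
    """Return {package: version or None} for the current environment."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None  # package is not installed here
    return versions


def find_mismatches(pinned, installed):
    """Compare versions pinned at development time with installed ones.

    Returns {package: (expected, found)} for every package that differs.
    """
    return {
        name: (expected, installed.get(name))
        for name, expected in pinned.items()
        if installed.get(name) != expected
    }


if __name__ == "__main__":
    # Hypothetical pins recorded when the model was trained.
    pinned = {"pandas": "2.1.4", "numpy": "1.26.2"}
    problems = find_mismatches(pinned, installed_versions(pinned))
    for name, (expected, found) in problems.items():
        print(f"{name}: expected {expected}, found {found or 'not installed'}")
```

Running a check like this as the first step in production turns a silent version drift into an explicit failure before any model code runs.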

Now let’s see how ML pipelines can streamline or even remove this manual handover process and bridge the gap between notebook-based model experimentation in a development environment and model deployment in a production environment. The ultimate goal is that ML applications get deployed and updated in a fast, automated and safe manner.

First, let’s understand what an ML pipeline is.

What is an ML pipeline, and what are its key components?

Before we dive into what an ML pipeline is, let’s first understand what a “pipeline” is. Below is a definition from Wikipedia:

In computing, a pipeline, also known as a data pipeline, is a set of data processing elements connected in series, where the output of one element is the input of the next one. The elements of a pipeline are often executed in parallel or in time-sliced fashion. Some amount of buffer storage is often inserted between elements.

There are a few key components in the above definition, and they apply to an ML pipeline as well. Let’s see how:

A set of data processing elements — The first part of the definition talks about a set of data processing elements. This is very straightforward. If we apply this to an ML pipeline, the set of data processing elements could include data ingestion, data pre-processing, feature engineering, model training, model prediction and so on. For your ML pipelines, you can customize each component and add as many components to a pipeline as you need. Each component performs one task, and all tasks combined make up the pipeline. Dividing a workflow into multiple elements is very useful for ML applications, because each element in an ML pipeline could require very different compute resources. Allocating appropriate compute resources to each element, instead of using one giant compute instance for the whole workflow, is a good opportunity to improve compute efficiency.

Connected — The second point is that these components are connected. They do not run completely independently of each other. More importantly, the dependencies between the data processing elements described above are captured. In the context of an ML pipeline, the data ingestion step must finish before data pre-processing runs, and the model training step must be complete before model prediction.

Input / Output — Each data processing element in a pipeline has an input and output. Often, the output artifacts of one element are used as input artifacts to a subsequent step. These artifacts will be stored in a central location, such as an artifact repository.

In parallel / In time-sliced fashion — Describes how the elements of an ML pipeline are scheduled. “In parallel” means two elements that do not depend on each other can run at the same time. “In time-sliced fashion” strictly means that elements take turns on shared compute rather than running simultaneously. Either way, when one element depends on another, it has to wait until the other one finishes.

Buffer storage — Buffer storage is the artifact repository that we mentioned in the Input / Output section. It provides a central location for data artifacts, so that downstream elements can consume the outputs of upstream elements, and for the logs and metrics used for monitoring and tracking. With the artifact repository in place, you can re-use the outputs of some elements instead of running every element when you need to re-run the workflow. This can also improve pipeline efficiency and reduce compute costs. Additionally, you can set up a database for your ML metadata, including experiments, jobs, pipeline runs and metrics.
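The five ideas above (elements, connections, inputs and outputs, execution order, and buffer storage) can be sketched in a few lines of plain Python. This is only an illustration under simplifying assumptions, not how any particular framework works: the task names and functions are hypothetical placeholders, the artifact repository is an in-memory dictionary, and dependencies are resolved with the standard library's `graphlib.TopologicalSorter` (Python 3.9+).

```python
from graphlib import TopologicalSorter

# Each task lists the upstream tasks it needs and a function that receives
# the upstream outputs (keyed by task name) and returns its own output.
# All task names and functions are hypothetical placeholders.
TASKS = {
    "ingest": {"needs": [], "run": lambda inputs: [3.0, 1.0, 2.0]},
    "preprocess": {"needs": ["ingest"],
                   "run": lambda inputs: sorted(inputs["ingest"])},
    "features": {"needs": ["preprocess"],
                 "run": lambda inputs: [x * 2 for x in inputs["preprocess"]]},
    "stats": {"needs": ["preprocess"],
              "run": lambda inputs: {"n": len(inputs["preprocess"])}},
    "train": {"needs": ["features", "stats"],
              "run": lambda inputs: sum(inputs["features"]) / inputs["stats"]["n"]},
}


def dependency_graph(tasks):
    """Map each task name to the set of tasks that must finish first."""
    return {name: set(spec["needs"]) for name, spec in tasks.items()}


def run_pipeline(tasks):
    """Run tasks in dependency order, storing each output as an artifact."""
    artifacts = {}  # in-memory stand-in for an artifact repository
    for name in TopologicalSorter(dependency_graph(tasks)).static_order():
        inputs = {dep: artifacts[dep] for dep in tasks[name]["needs"]}
        artifacts[name] = tasks[name]["run"](inputs)
    return artifacts


def parallel_batches(tasks):
    """Group tasks into batches whose members do not depend on each other."""
    sorter = TopologicalSorter(dependency_graph(tasks))
    sorter.prepare()
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # everything runnable right now
        batches.append(ready)
        sorter.done(*ready)
    return batches


if __name__ == "__main__":
    print(run_pipeline(TASKS)["train"])
    print(parallel_batches(TASKS))
```

In this sketch, `features` and `stats` both depend only on `preprocess`, so they land in the same batch and could run in parallel, while `train` has to wait for both of them.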

Below is a sample of what an ML training pipeline could look like:

This sample ML training pipeline includes 10 tasks. Generally, an ML pipeline is defined using either a Python SDK or a YAML file. For each task, you can define the following configurations:

Name — the name of the task

Description — a description of the task

Input — the input settings of the task

Output — the output settings of the task

Compute — the underlying compute resources used to execute the task

Parameters — additional parameters supplied to run the task

Requirements — environment dependencies required to be installed in the compute resources

Task dependencies — the connected upstream and downstream tasks.

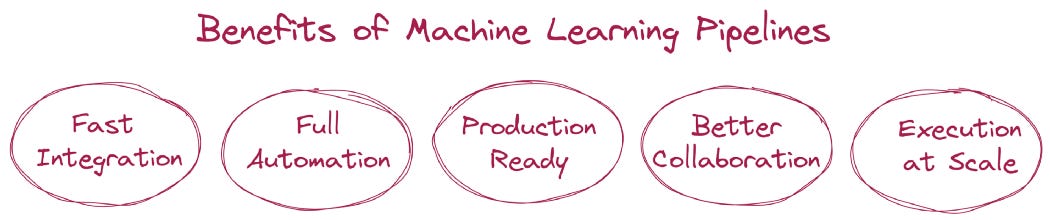
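As a rough illustration of the task settings listed above, the sketch below models one task as a Python dataclass and adds a small check that every declared upstream task exists. The class name, field names, compute label, file paths and package pins are all hypothetical; each real framework has its own SDK or YAML schema for the same ideas.

```python
from dataclasses import dataclass, field


@dataclass
class TaskConfig:
    """One pipeline task; the fields mirror the settings listed above."""
    name: str
    description: str = ""
    inputs: dict = field(default_factory=dict)
    outputs: dict = field(default_factory=dict)
    compute: str = "cpu-small"  # hypothetical compute target label
    parameters: dict = field(default_factory=dict)
    requirements: list = field(default_factory=list)
    depends_on: list = field(default_factory=list)


def validate(tasks):
    """Return a list of problems: duplicate names or unknown upstream tasks."""
    names = [task.name for task in tasks]
    problems = [
        f"duplicate task name: {name}"
        for name in sorted(set(names))
        if names.count(name) > 1
    ]
    known = set(names)
    for task in tasks:
        for upstream in task.depends_on:
            if upstream not in known:
                problems.append(f"{task.name} depends on unknown task {upstream}")
    return problems


# Hypothetical two-task example; paths and versions are placeholders.
features = TaskConfig(
    name="feature_engineering",
    description="Build model features from the cleaned data",
    outputs={"features": "artifacts/features.parquet"},
    requirements=["pandas==2.1.4"],
)
train = TaskConfig(
    name="train_model",
    description="Fit a model on the engineered features",
    inputs={"features": "artifacts/features.parquet"},
    outputs={"model": "artifacts/model.pkl"},
    compute="gpu-large",
    parameters={"learning_rate": 0.1},
    requirements=["scikit-learn==1.4.0"],
    depends_on=["feature_engineering"],
)

if __name__ == "__main__":
    print(validate([features, train]))
```

Keeping compute and requirements on the task rather than on the whole pipeline is what lets each step get its own resources and environment.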

The benefits of adopting ML pipelines

Compared to having all the code in one notebook, modularizing the code into ML pipelines provides the following characteristics:

Self-contained — Each task in an ML pipeline is a self-contained processing unit. You can define the necessary configurations and dependencies and select the most appropriate compute resources for each task.

Re-use — Because each task is self-contained, and the task outputs and related metadata are stored in a central artifact location, you have the flexibility to decide which tasks can be skipped when triggering a pipeline re-run. For example, if you just want to re-run the model training task, you can reuse the outputs of the previous tasks such as data processing and feature engineering.

Modularized code — In a pipeline, each task is modularized with its own configurations and dependencies, which is aligned with general DevOps standards. This can reduce the code-rewriting effort required from ML engineers and minimize the potential errors introduced by rewriting.
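The re-use behavior described above can be sketched as a cache keyed on a fingerprint of each task. This is a simplified illustration under stated assumptions: the fingerprint covers only the task name, its parameters and the fingerprints of its upstream tasks (not code changes or input data changes), the store is a plain dictionary standing in for an artifact repository, and all function and task names are hypothetical.

```python
import hashlib
import json


def cache_key(task_name, params, upstream_keys):
    """Fingerprint a task from its name, parameters and upstream fingerprints."""
    payload = json.dumps(
        {"task": task_name, "params": params, "upstream": sorted(upstream_keys)},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def run_with_cache(steps, store):
    """Run steps in order, skipping any whose fingerprint is already stored.

    steps: list of (name, params, upstream_names, function), in dependency order.
    store: dict mapping cache key -> output (stand-in for an artifact repository).
    Returns (outputs by task name, names of the tasks that actually ran).
    """
    keys, outputs, executed = {}, {}, []
    for name, params, upstream, fn in steps:
        key = cache_key(name, params, [keys[u] for u in upstream])
        keys[name] = key
        if key not in store:
            store[key] = fn(params, {u: outputs[u] for u in upstream})
            executed.append(name)
        outputs[name] = store[key]
    return outputs, executed


def make_steps(learning_rate):
    """Hypothetical two-step pipeline: feature engineering, then training."""
    return [
        ("features", {"scale": 2}, [],
         lambda p, up: [x * p["scale"] for x in (1, 2, 3)]),
        ("train", {"lr": learning_rate}, ["features"],
         lambda p, up: sum(up["features"]) * p["lr"]),
    ]


if __name__ == "__main__":
    store = {}
    _, first = run_with_cache(make_steps(0.1), store)   # both tasks run
    _, second = run_with_cache(make_steps(0.5), store)  # only "train" re-runs
    print(first, second)
```

Changing only the training parameter changes the fingerprint of `train` but not of `features`, so the feature engineering output is reused from the store.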

Therefore, building an ML pipeline brings the following benefits:

Fast iteration — Once an ML solution is deployed into the production environment, data scientists can update the pipeline whenever necessary, and their code changes will be merged and deployed following the defined DevOps standards. This can significantly reduce the time required to update an ML model and shorten the iteration cycle.

Full automation — Once an ML solution is deployed into the production environment, all the code changes will follow defined DevOps standards and get deployed automatically. Therefore, no manual handover will be required between data scientists and ML engineers.

Production ready — Because all the code is modularized in a pipeline, the code handed over by data scientists is close to production-ready and the rewriting effort can be minimal.

Better collaboration — The way ML pipelines are constructed avoids a lot of unnecessary communication between data scientists and ML engineers, as the pipeline definitions and task configurations contain most of the information ML engineers need to deploy the pipelines. If all the code is in one big notebook, ML engineers will need far more back-and-forth with data scientists to get clarity.

Execution at scale — The fast iteration and full automation characteristics of an ML pipeline minimize the manual effort required to operate ML solutions in a production environment. Hence, the same number of data scientists and ML engineers are able to handle a much larger number of ML applications. This allows organizations to scale the benefits of ML and AI with limited resources.

To summarize, I would love to reiterate:

The output of a notebook is a model, while the output of a pipeline is a piece of software. At the production stage, it is the ML system that gets deployed, not the ML model. Therefore, ML pipelines are a great mechanism to bridge the gap between the ML development environment (which is notebook-driven) and the ML production environment (which is system-driven).

Open source frameworks for implementing ML pipelines

If you are now more motivated to generate ML pipelines, instead of producing a notebook, below is a list of open source frameworks that you can leverage:

ZenML — the open source MLOps framework for unifying your ML stack

MLflow Recipes — a module of MLflow that enables data scientists to quickly develop high-quality models and deploy them to production

Metaflow — a framework for real-life data science and ML

Kubeflow Pipelines — Kubeflow Pipelines (KFP) is a platform for building and deploying portable and scalable machine learning (ML) workflows by using Docker containers

MLRun — The open source MLOps orchestration framework

If you would like to see me write hands-on tutorials on building ML pipelines, please subscribe and leave a comment to let me know which specific framework you are most interested in.

If you liked the article, stay tuned, subscribe, and share. I’d very much appreciate the support!