ML model registry — the “interface” that binds model experiments and model deployment

MLOps in Practice: A deep dive into ML model registries, model versioning and model lifecycle management

👋 Hi folks, thanks for reading my newsletter! My name is Yunna Wei, and I write about the modern data stack, MLOps implementation patterns and data engineering best practices. The purpose of this newsletter is to help organizations select and build an efficient Data+AI stack based on real-world experience and insights, and to help individuals build their data and AI careers with deep-dive tutorials and guidance. Please consider subscribing if you haven’t already, and reach out on LinkedIn if you ever want to connect!

Background

In my previous article:

MLOps in Practice — De-constructing an ML Solution Architecture into 10 components

I talked about the architectural importance of managing the model metadata and artifacts generated by ML experiment runs. The model training process produces many artifacts that are needed both for further performance tuning and for subsequent model deployment. These artifacts include the trained models themselves, as well as model parameters and hyperparameters, metrics, code, notebooks, configurations and so on. Centrally managing and leveraging these artifacts and metadata is critical for a robust MLOps architecture. Therefore, in today’s article, I will discuss the ML model registry store, which acts as the “interface” that binds model experiments and model deployment.

Specifically, today’s article will focus on the following aspects:

The question of …… what is an ML registry store, as well as the key functions performed by a ML registry store

The key benefits brought by an ML model registry store

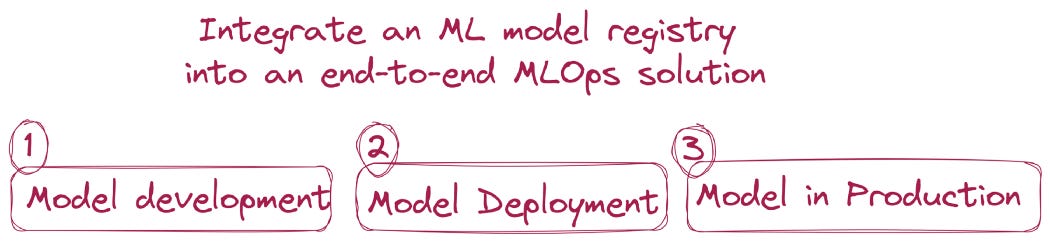

How to integrate an ML model registry into an end-to-end MLOps solution

The technologies behind a ML registry store and popular open source solutions for a ML registry store

What is an ML registry?

An ML registry is a centralized place to store all your ML artifacts, along with their metadata, from early-stage experiments to production-ready models. Similar to container registries like Docker Hub or Python package registries like PyPI, an ML registry allows data scientists and ML practitioners to publish and share ML models and artifacts. An ML registry generally provides a user interface (UI), as well as a set of APIs, for ML admins and users to register, discover, share and version ML models, and to manage their permissions and lifecycle.
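To make the register, discover and version functions concrete, here is a minimal in-memory sketch in Python. It is not the API of MLflow or any other registry. The `ModelRegistry` class, its method names and the `s3://` URIs are hypothetical. A real registry would persist this information in the stores described later in this article rather than keeping it in a dictionary.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ModelVersion:
    name: str
    version: int
    artifact_uri: str  # where the model files live (hypothetical URIs below)
    tags: dict = field(default_factory=dict)
    created_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


class ModelRegistry:
    """Hypothetical in-memory registry: model name -> ordered list of versions."""

    def __init__(self):
        self._models = {}

    def register(self, name, artifact_uri, tags=None):
        """Publish a new version of `name`; version numbers start at 1 and are never reused."""
        versions = self._models.setdefault(name, [])
        mv = ModelVersion(name, len(versions) + 1, artifact_uri, dict(tags or {}))
        versions.append(mv)
        return mv

    def get(self, name, version):
        versions = self._models[name]
        if not 1 <= version <= len(versions):
            raise KeyError(f"{name} has no version {version}")
        return versions[version - 1]

    def latest(self, name):
        return self._models[name][-1]

    def search(self, **tags):
        """Discover versions (across all models) whose tags match every key/value given."""
        return [
            mv
            for versions in self._models.values()
            for mv in versions
            if all(mv.tags.get(k) == v for k, v in tags.items())
        ]


registry = ModelRegistry()
registry.register("churn-classifier", "s3://models/churn/1", {"team": "growth"})
registry.register("churn-classifier", "s3://models/churn/2", {"team": "growth"})
registry.register("fraud-detector", "s3://models/fraud/1", {"team": "risk"})
print(registry.latest("churn-classifier").version)  # 2
print([mv.name for mv in registry.search(team="risk")])  # ['fraud-detector']
```

Keeping versions append-only means a version number always refers to the same artifact. Other records, such as training runs, deployments and audit entries, can therefore point at a model version safely.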

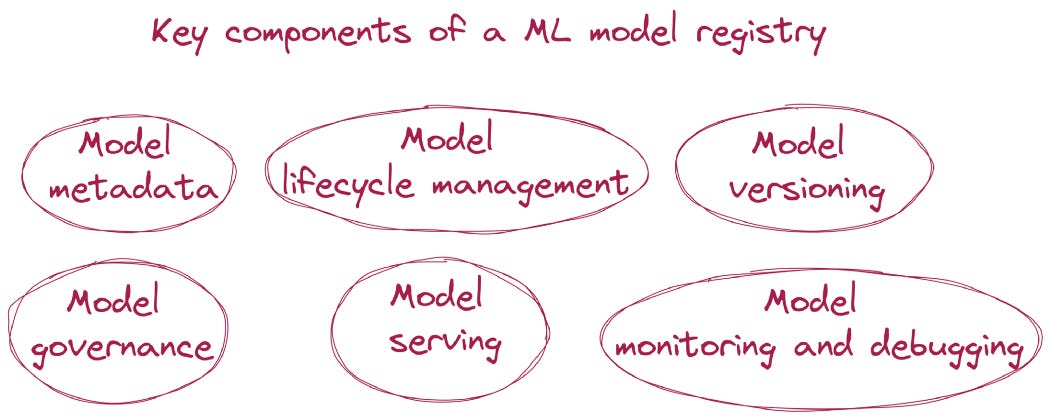

The information kept within an ML registry store can be summarized into the following categories:

Model metadata. This includes the model name, annotations and descriptions, tags, creation time, modification time and model schema (input schema and output schema).

Model lifecycle management. This covers all stages of a model’s life, from its creation to its retirement. For example, MLflow provides predefined stages for common use cases, such as Staging, Production and Archived (recent MLflow releases deprecate these stages in favor of model version aliases).

Model versioning. In practice, almost every registered ML model ends up with multiple versions, because ML models need to be constantly monitored and updated as the business context and data change. Model versioning is more than assigning a version number. It is a mechanism that aligns each version of a model with the data, features and code used to train it, providing end-to-end model lineage.

Model governance. This includes managing model permissions (controlling who can manage and update models), auditing model activities and usage trails, reviewing and approving models before they are deployed into production, sending notifications for critical model changes, and tracking model lineage.

Model serving. A model registry can facilitate model serving by providing webhooks that let ML engineers listen for model registry events. When a particular event happens, corresponding actions can be triggered automatically. You can use webhooks to automate and integrate your ML pipelines with existing CI/CD tools and workflows. For example, you can trigger a CI build when a new model version is created, or notify your team members through Slack each time a model transition to Production is requested.

Model monitoring and debugging. After an ML model is deployed into production, its performance must be monitored. An ML model registry gives data scientists a reference point for this. They can test the registered, deployed model versions against production data and compare the results with the metrics recorded at training time. If model degradation is identified, data scientists can use the model lineage information to find the root cause.
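The lifecycle, governance and serving functions above interact. A stage change should be permission-checked, recorded in an audit trail and announced to anyone listening, which is what a registry webhook does over HTTP. The sketch below models that flow in memory.

The stage names follow MLflow’s Staging/Production/Archived convention. The `archive_existing` flag mirrors the `archive_existing_versions` option of MLflow’s `MlflowClient.transition_model_version_stage`. Everything else is a hypothetical illustration, not any product’s API: the roles, the transition policy, the event layout and the `GovernedModel` class itself.

```python
from datetime import datetime, timezone

# Hypothetical transition policy: the stages a version may move to from its current stage.
ALLOWED_TRANSITIONS = {
    "None": {"Staging", "Archived"},
    "Staging": {"Production", "Archived"},
    "Production": {"Archived"},
    "Archived": {"Staging"},
}
# Hypothetical role-based permissions: the target stages each role may request.
ROLE_CAN_MOVE_TO = {
    "data_scientist": {"Staging", "Archived"},
    "approver": {"Staging", "Production", "Archived"},
}


class GovernedModel:
    def __init__(self, name):
        self.name = name
        self.stages = {}      # version -> current stage
        self.audit_log = []   # append-only: (UTC timestamp, user, action, outcome)
        self.listeners = []   # callables notified after every successful transition

    def add_version(self, version):
        self.stages[version] = "None"

    def transition(self, user, role, version, target, archive_existing=False):
        current = self.stages[version]
        action = f"v{version}: {current} -> {target}"
        if target not in ROLE_CAN_MOVE_TO.get(role, set()):
            self._audit(user, action, "denied: role")
            raise PermissionError(f"{user} ({role}) may not move versions to {target}")
        if target not in ALLOWED_TRANSITIONS[current]:
            self._audit(user, action, "denied: policy")
            raise ValueError(f"cannot move v{version} from {current} to {target}")
        if archive_existing:
            # Archive any other version currently in the target stage, and audit that too.
            for v, stage in self.stages.items():
                if v != version and stage == target:
                    self.stages[v] = "Archived"
                    self._audit(user, f"v{v}: {target} -> Archived (auto)", "ok")
        self.stages[version] = target
        self._audit(user, action, "ok")
        event = {"model": self.name, "version": version, "from": current, "to": target}
        for notify in self.listeners:
            notify(event)

    def _audit(self, user, action, outcome):
        self.audit_log.append((datetime.now(timezone.utc).isoformat(), user, action, outcome))


model = GovernedModel("churn-classifier")
model.listeners.append(lambda e: print(f"notify: {e['model']} v{e['version']} -> {e['to']}"))
model.add_version(1)
model.add_version(2)
model.transition("alice", "data_scientist", 1, "Staging")
model.transition("bob", "approver", 1, "Production")
model.transition("alice", "data_scientist", 2, "Staging")
model.transition("bob", "approver", 2, "Production", archive_existing=True)
print(model.stages)  # {1: 'Archived', 2: 'Production'}
```

Denied attempts are audited as well as successful ones. For governance, the record of who *tried* to promote a model matters as much as the record of who succeeded.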

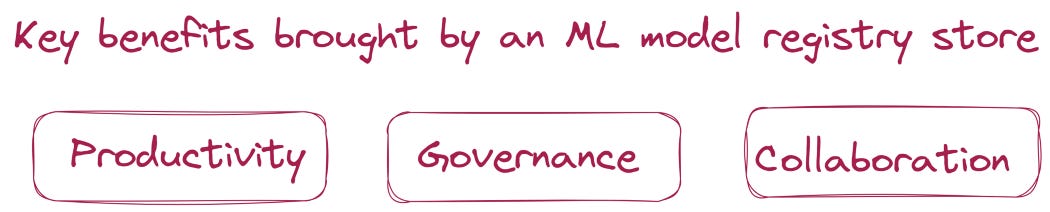
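Model versioning and model monitoring, described above, meet when a deployed version degrades. The registry holds the metrics recorded at training time and the lineage of that version, and a monitoring job compares live metrics against them.

The sketch below is a hypothetical illustration of that idea. The following are all assumptions chosen for the example:

- the record layout
- the SHA-256 dataset fingerprint
- the commit hash
- the 5% relative tolerance

Metrics are assumed to be "higher is better".

```python
import hashlib
import json
from dataclasses import dataclass


def fingerprint(rows):
    """Deterministic SHA-256 of a (small) training dataset, serialized as sorted-key JSON."""
    return hashlib.sha256(json.dumps(rows, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class VersionRecord:
    version: int
    data_fingerprint: str    # which data the version was trained on
    features: tuple          # which features it uses
    code_commit: str         # which code produced it (hypothetical git SHA below)
    baseline_metrics: tuple  # (metric, value) pairs recorded at training time


def degraded_metrics(record, live, tolerance=0.05):
    """Return {metric: relative drop} for metrics that fell more than `tolerance` below baseline."""
    flagged = {}
    for name, base in record.baseline_metrics:
        if name in live and base > 0:
            drop = (base - live[name]) / base
            if drop > tolerance:
                flagged[name] = round(drop, 4)
    return flagged


train_rows = [{"tenure": 12, "plan": "pro", "churned": 0}, {"tenure": 2, "plan": "free", "churned": 1}]
v7 = VersionRecord(
    version=7,
    data_fingerprint=fingerprint(train_rows),
    features=("tenure", "plan"),
    code_commit="9c1e2d4",
    baseline_metrics=(("auc", 0.90), ("precision", 0.80)),
)
flags = degraded_metrics(v7, {"auc": 0.81, "precision": 0.79})
if flags:
    # Lineage says exactly which data, features and code to inspect or retrain from.
    print(flags, v7.data_fingerprint[:12], v7.features, v7.code_commit)
```

Here AUC has dropped by 10% relative to its baseline and is flagged, while the 1.25% precision drop stays under the tolerance.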

Key benefits brought by an ML model registry store

Productivity: A central ML registry breaks down the silos in which each data science or ML team manages its own ML models and artifacts. An ML registry can act as an ML model marketplace where teams publish, share and reuse each other’s work. Overall, this can significantly improve team productivity, so that more ML applications are developed with fewer resources.

Governance: Responsible AI and ML governance have become critical topics for many organizations from regulatory, ethical, social and legal perspectives. A central ML registry can support AI/ML governance efforts by providing information such as model permissions, model usage trails, model audit reports, and model lineage back to the raw data and features.

Collaboration: An ML model registry is the single, unified interface shared by data scientists and ML engineers, and it facilitates and streamlines the handoff between them. When data scientists are happy with the overall model performance after rounds of experiments, they hand over the code and model to ML engineers for production deployment. With an ML model registry in place, ML engineers have good visibility into how the model was trained, what data and features were used, and what the feature engineering logic is. This significantly reduces the communication effort between data scientists and ML engineers and improves collaboration across teams.

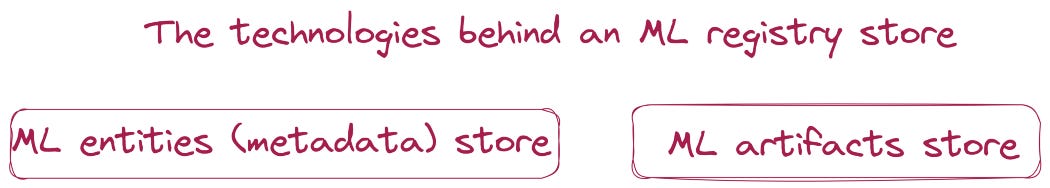

The technologies behind an ML registry store

Under the hood, model registries generally comprise the following two elements:

The first is an ML entities (metadata) store. It stores the metadata of ML entities, such as ML experiments, runs, parameters, metrics, tags, notes, sources and lifecycle stages, as well as the locations of ML artifacts. An ML entities store is normally implemented with a SQL relational database, such as PostgreSQL, MySQL, Microsoft SQL Server (MSSQL) or SQLite.

The second is an ML artifacts store. It persists artifact files, models, images, in-memory objects, model summaries and any other objects logged to the ML registry store. The artifact store is a location suitable for large data, and it is where clients log their artifact output (for example, models). The artifact store is generally backed by persistent storage, such as Amazon S3 and S3-compatible storage, Azure Blob Storage, Google Cloud Storage, an FTP server, an SFTP server, NFS or HDFS.
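The split between the two stores can be shown in a few lines of standard-library Python:

- SQLite plays the entity store.
- A temporary local directory plays the artifact store, standing in for S3, Blob Storage, GCS and similar.

The table layout, function names and model name are hypothetical and much simpler than what production registries use. The point is the division of labor. The database row holds only metadata plus a pointer to the bytes (and, in this sketch, a checksum). The bytes themselves live in the artifact store.

```python
import hashlib
import pickle
import sqlite3
import tempfile
from pathlib import Path

# Entity store: a deliberately minimal, hypothetical schema (real registries store far more).
SCHEMA = """
CREATE TABLE model_versions (
    name              TEXT NOT NULL,
    version           INTEGER NOT NULL,
    stage             TEXT NOT NULL DEFAULT 'None',
    artifact_location TEXT NOT NULL,  -- pointer into the artifact store
    sha256            TEXT NOT NULL,  -- checksum of the stored bytes
    PRIMARY KEY (name, version)
);
"""


def log_model(conn, artifact_root, name, model_obj):
    """Write the model bytes to the artifact store, then record metadata in the entity store."""
    (version,) = conn.execute(
        "SELECT COALESCE(MAX(version), 0) + 1 FROM model_versions WHERE name = ?", (name,)
    ).fetchone()
    data = pickle.dumps(model_obj)
    path = Path(artifact_root) / name / str(version) / "model.pkl"
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_bytes(data)
    conn.execute(
        "INSERT INTO model_versions (name, version, artifact_location, sha256) VALUES (?, ?, ?, ?)",
        (name, version, str(path), hashlib.sha256(data).hexdigest()),
    )
    conn.commit()
    return version


def load_model(conn, name, version):
    """Look up the pointer in the entity store, then fetch and verify the bytes."""
    row = conn.execute(
        "SELECT artifact_location, sha256 FROM model_versions WHERE name = ? AND version = ?",
        (name, version),
    ).fetchone()
    if row is None:
        raise KeyError(f"{name} v{version} is not registered")
    location, digest = row
    data = Path(location).read_bytes()
    if hashlib.sha256(data).hexdigest() != digest:
        raise ValueError(f"checksum mismatch for {location}")
    return pickle.loads(data)  # only unpickle artifacts from a store you trust


with tempfile.TemporaryDirectory() as artifact_root:  # stands in for S3, GCS or HDFS
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    log_model(conn, artifact_root, "churn-classifier", {"coef": [0.4, -1.2]})
    v2 = log_model(conn, artifact_root, "churn-classifier", {"coef": [0.5, -1.1]})
    print(v2, load_model(conn, "churn-classifier", v2))  # 2 {'coef': [0.5, -1.1]}
```

Swapping SQLite for PostgreSQL, or the directory for an object store, changes where each half lives but not this division of labor.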

Integrating an ML model registry into an end-to-end MLOps solution

An ML model registry plays a very important role in three critical stages of an end-to-end MLOps solution:

The first stage is model development, which is highly iterative and experimental. Data scientists need to try various algorithms and frameworks, and different combinations of features, parameters and hyperparameters, to find out what works best for the problem. Being able to reproduce ML experiment runs can therefore significantly improve data scientists’ productivity and help them find the best solution more quickly. An ML model registry provides lineage capabilities that allow data scientists to trace a registered ML model back to the training run that produced it. They can then either reproduce the model or make the necessary changes to train a newer version.

The second stage is model deployment. Model registry events can be used to trigger ML model deployment automatically, for example a new version being created for a registered model, or a model version’s stage changing from Staging to Production. Creating a new model version can kick off a CI build that tests the model, and a request to move a model to Production can notify reviewers through Slack. More generally, registry events can be wired into existing CI/CD pipelines and workflows.

The third stage is models in production. A model registry provides a holistic view of all the models in production and allows ML operations teams to monitor them accordingly. ML models are extremely data-reliant. Their performance can therefore deteriorate not only because of suboptimal code, but also because the data landscape keeps evolving. Once performance deterioration is identified, an ML registry service can help the ML operations team debug and retrain the model by providing the necessary model artifacts and model lineage.
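The point that models degrade as data landscapes evolve can be made measurable. One common drift metric is the Population Stability Index (PSI). It is swapped in here for illustration and is not prescribed by this article or by any particular registry.

PSI compares the distribution of a feature at training time with its distribution in production. With training-time proportions e_i and production proportions a_i over the same bins, PSI is the sum over bins of (a_i - e_i) * ln(a_i / e_i). The equal-width binning, the epsilon floor and the often-quoted 0.1 and 0.25 alert thresholds below are rules of thumb, not standards.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index: sum over bins of (a_i - e_i) * ln(a_i / e_i)."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid division by zero for a constant feature

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Equal-width bins over the training range; clamp out-of-range values into edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor empty bins at eps so the logarithm stays finite.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_level(score):
    """Rule-of-thumb interpretation of a PSI score (conventions, not standards)."""
    if score < 0.1:
        return "stable"
    if score < 0.25:
        return "moderate drift"
    return "significant drift: investigate and consider retraining"


training = [i / 100 for i in range(100)]          # feature values seen at training time
production = [0.5 + i / 200 for i in range(100)]  # the same feature, shifted upward in production
print(drift_level(psi(training, training)))    # stable
print(drift_level(psi(training, production)))  # significant drift: investigate and consider retraining
```

In a registry-backed setup, the training-time distribution (or its bin proportions) would be stored alongside the model version, so that a monitoring job can compute the score without re-reading the training data.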

Therefore, MLOps in its entirety cannot be done fully and correctly until you have a state-of-the-art Model Registry.
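The event-driven model deployment stage can be sketched as a small webhook receiver. It first verifies that the request really came from the registry, then maps the event to a CI or CD action.

This is a hypothetical sketch. The following are assumptions for illustration:

- the HMAC-SHA256 signing scheme
- the shared secret
- the payload fields
- the event names, which are modeled loosely on the style of Databricks’ registry webhooks

Check your registry’s documentation for its actual payload and signature format.

```python
import hashlib
import hmac
import json

SHARED_SECRET = b"example-secret"  # hypothetical; load from a secret manager in practice


def sign(body: bytes, secret: bytes = SHARED_SECRET) -> str:
    """HMAC-SHA256 signature that the sender is assumed to attach to each request."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def handle_registry_webhook(body: bytes, signature: str, secret: bytes = SHARED_SECRET) -> str:
    """Verify the payload, then decide which pipeline (if any) to trigger."""
    if not hmac.compare_digest(sign(body, secret), signature):  # constant-time comparison
        raise PermissionError("invalid webhook signature")
    event = json.loads(body)
    kind, model, version = event["event"], event["model_name"], event["version"]
    if kind == "MODEL_VERSION_CREATED":
        return f"ci: run tests for {model} v{version}"
    if kind == "MODEL_VERSION_TRANSITIONED_STAGE" and event.get("to_stage") == "Production":
        return f"cd: deploy {model} v{version}"
    return "ignored"


payload = json.dumps(
    {"event": "MODEL_VERSION_TRANSITIONED_STAGE", "model_name": "churn-classifier",
     "version": 3, "to_stage": "Production"}
).encode("utf-8")
print(handle_registry_webhook(payload, sign(payload)))  # cd: deploy churn-classifier v3
```

Returning a string keeps the sketch testable. A real handler would call the CI/CD system’s own API at those points.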

Popular solutions for an ML registry store

MLflow Model Registry: MLflow is an open source platform for managing the ML lifecycle, including experimentation, reproducibility, deployment and a central model registry. The model registry is the fourth major component of MLflow. With MLflow, you can keep the registry store on the local file system of the machine where MLflow is running. Alternatively, you can spin up a remote, central tracking server where teams can register and share ML model artifacts. If you are already on Databricks, you have access to a hosted tracking server.

VertaAI ModelDB: An open source system for machine learning model versioning, metadata and experiment management. The Verta library comes with a model catalog component where users can find, publish and use ML models or ML model pipeline components.

Amazon SageMaker Model Registry: SageMaker is AWS’s managed ML service, providing various components for users to build, train and deploy ML models. SageMaker also has a model registry service in which users can catalog versions of an ML model into model package groups. If you have built your ML platform on top of SageMaker, SageMaker’s native model registry could be a good option.

Summary

The ML model registry is a central component of MLOps that helps close the well-known gap between model experimentation activities and model production activities. I believe that MLOps cannot be done right until you have a state-of-the-art Model Registry.

Please feel free to let me know if you have any comments or questions on this topic or other MLOps-related topics! I generally publish one article related to data and AI every week. Please feel free to subscribe so that you get notified when these articles are published.

Thanks so much for your support!