Data Engineering Best Practice — Embedding Reliability and Integrity into Your Data Pipelines

Building highly reliable and trustworthy data pipelines to deliver high quality data and information for downstream data consumers

👋 Hi folks, thanks for reading my newsletter! My name is Yunna Wei, and I write about modern data stack, MLOps implementation patterns and data engineering best practices. The purpose of this newsletter is to help organizations select and build an efficient Data+AI stack with real-world experiences and insights and assist individuals to build their data and AI career journey with deep-dive tutorials and guidance; Please consider subscribing if you haven’t already, reach out on LinkedIn if you ever want to connect!

The Importance of Data Quality and Data Reliability

It goes without saying, data is absolutely critical to the operations and applications of many organizations nowadays. For data engineers, it is not only about purely delivering data through Extract, Transform and Load (ETL) pipelines, but more about delivering reliable data so that business can make valid and well-informed data-driven decisions, data scientists can develop trustworthy Machine Learning(ML) models and data-intensive applications can run without worrying about corrupted data.

I have to say, managing and maintaining data quality takes consolidated effort from multiple teams, but data engineers definitely have a role to play here. Therefore, the focus of this article is on how, in practice, data engineers can embed data quality controls and validations within data pipelines.

Below is an outline for today’s article:

The key components of data quality management and the scope of each key component;

A medallion design pattern — End-to-end pipeline flow design pattern with data quality controls in place ;

Implementation guidance for embedding data quality checks into the data pipelines. I will deep dive into 3 frameworks — great_expectations, deequ along with pydeequ, and Databrick’s Delta Live Table (DLT) — for each framework, I will explain how each works, and share implementation guidance and programming models for each.

Key Components of Data Quality Management

To understand the data through data profiling and data exploratory analysis, it is necessary for data engineers to really understand what data their pipelines are working with. There are two levels of understanding the data. The first level is to understand the data from a technical and statistical perspective, such as the average size (number of records) of every batch of the data, the minimum, the maximum, the average, uniqueness and completeness. Another level is to understand the data from a business perspective — like understanding how the data is being used, which columns are critical, and any calculations required on the data.

Defining the data quality rules, checks and expectations. Data engineers can develop from the data profiling and analysis results you would get from the preceding dot point. They also need to talk to the business owners and end users of the datasets they are piping with, to get their requirements on data quality.

Implementing checks and validations based on the above defined data quality constraints through data pipelines in an automatic manner, to make sure every batch of newly arrived data goes through the required checks and validations before landing into the data storage locations.

Agreeing with the key stakeholders what actions to take if the data pipelines detect there is any violations of data quality rules. For example, if the dataset that your pipeline is processing is extremely business-critical and there should be no data quality compromise at all, any data quality violation should warrant a pipeline failure and send a severe alarm to the key people, so that these data quality issues can be fixed immediately. However, if the use cases you are supporting can tolerate some data quality issues, instead of failing the pipelines, you can either drop those bad records or put them in different locations and deal with them later.

Reporting and documenting data quality check results to a monitoring solution. Defining and monitoring Key Performance Indicators (KPIs) — like number of passed records and number of failed records, around data quality not only provides better visibility and observability, but more importantly, monitoring data quality gives people the clarity where the common data quality issues are, so that corresponding actions can be taken to fix the root causes of bad data. In the long-term, there should be an upward trend of overall data quality.

End-to-end pipeline flow with data quality controls in place

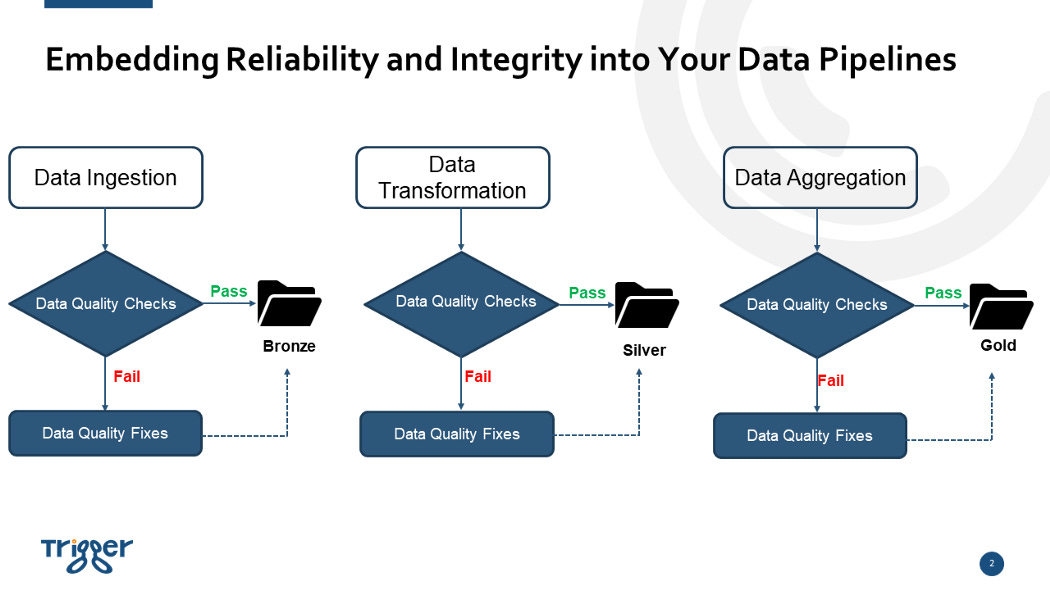

The illustration shown below, displays a typical end-to-end data flow that a data pipeline will go through, including ingesting raw data, data transformation and data aggregation.

The key message is that before persisting the data into the storage layers (Bronze, Silver, Gold), the data must pass data quality checks and for the corrupted data records that fail the data quality checks to be dealt with separately, before they are written into the storage layer. I will demonstrate how to implement the a pipeline respectively using great_expectations, deequ and pydeequ, and Databrick’s Delta Live Table (DLT) in the following part of this article. However before I go to the implementation part, you might be wondering what “Bronze Silver, Gold” mean here.

The “Bronze => Silver => Gold” pattern is a type of data flow design , also called a medallion architecture. A medallion architecture is designed to incrementally and progressively improve the structure and quality of data as it flows through each layer of the architecture. This is why it is relevant for today’s article regarding data quality and reliability.

Below is a summary of what each layer — Bronze, Silver and Goled— is under a medallion architecture:

Bronze (raw data) — the bronze layer, also called raw data layer, is where data engineers ingest and persist all the raw data from external data sources. The table structures in this layer correspond to the source system table structures “as-is,” along with any additional metadata columns that capture the load date/time, process ID, etc.

Silver layer (cleansed and conformed data) — in the silver layer, the data from the Bronze layer is matched, merged, conformed and cleansed so that the Silver layer can provide a global view of all its key business entities, concepts and transactions. (e.g. master customers, stores, non-duplicated transactions and cross-reference tables). Generally the data structure in the silver layer remains the same as the raw layer

Gold layer (curated business-level tables) — data in the Gold layer is typically organized in consumption-ready “project/product/use case-specific” databases. The gold layer is for reporting and uses more de-normalized and read-optimized data models with fewer joins. The final layer of data transformations and data quality rules are applied here. For example, Business Intelligence(BI) reports and dashboards generally consume data directly from the gold layer.

Generally the data quality requirement become more and more stringent as the data flows from raw to bronze to silver and to gold as the gold layer directly serves the business. You should, by now, have a high-level understanding of what a medallion data design pattern is and why it is relevant for a data quality discussion. If you are keen to knowing more details about medallion architecture pattern, you can visit here.

From next part onwards, I will explain how to implement data quality checks in an end-to-end data pipeline by leveraging different data quality frameworks.

Let’s first start with Great Expectations.

Great Expectations Implementing Guidance

Great Expectations is a open-source Python library, which provides data quality management capabilities through data testing, documentation, and profiling. The three key components of Great Expectations are as follows:

Expectations — An expectation is a declarative statement about data. An expectation is specifically a data quality rule that a computer can compute, but a human can also understand, such as …… expect_column_values_to_not_be_null. Great Expectations comes with pre-defined expectations library, supporting various data sources including Pandas, Spark, MySQL, MSSQL, SQLite, PostgreSQL, Trino, Redshift, BigQuery and Snowflake. Another point worth mentioning is that Great Expectations allows users to create their own custom expectations.

Data Sources — Great Expectations provides a Datasource class to interact with your underlying data systems. The Datasource class provides an interface for a Data Connector and an Execution Engine to work together. The execution engine that is supported by Great Expectations include Pandas, Spark and SqlAlchemy. There are 3 types of data connectors for Great Expectations. The first is InferredAssetDataConnector, which infers data_asset_name by using a regex that takes advantage of patterns that exist in the filename or folder structure. The second is ConfiguredAssetDataConnector, which allows users to have the most fine-tuning, and requires an explicit listing of each Data Asset that you want to connect to. And, the third is RuntimeDataConnector, which is a special kind of data connector that enables you to use a RuntimeBatchRequest to provide a Batch’s data directly at runtime. The RuntimeBatchRequest can wrap an in-memory dataframe, a filepath, or a SQL query, and must include batch identifiers that uniquely identify the data, such as the run_id from a job scheduled by a workflow orchestrator.

Validations and Checkpoints — with Great Expectations, when you validate your data for the first time, a checkpoint will be created for you. Checkpoints are reuseable. Once you have created your checkpoint, configured it to your specifications, and specified any actions you want it to take based on the validation Results, all you will need to do in the future is tell the checkpoint to run.

Below is a programming model for Great Expectations:

The first step is to initiate a data context. You can think of a data context as a YAML file or a python dictionary, containing all the required configurations to run Great Expectations validation jobs. These configurations include data sources, metadata stores (expectations store, validations store, evaluation parameter store, checkpoint store, profile store), and a data docs site. With Great Expectations, you can initialize a new Data Context with the CLI, or you can instantiate a data context within your notebook or workflow tool without a YAML file. Data context is the core of Great Expectations. Without it, you cannot run any jobs.

The second step is to connect to the data, based on the data sources configurations you defined in the data context file. Your data can be in-memory Pandas or Spark data frame, or your data can come from a file system, a database (MySQL, PostgreSQL, MSSQL, Redshift, BigQuery and so on), or a cloud storage location (AWS S3, Google Cloud Storage (GCS), Azure Blob Storage).

The third step is to develop expectations and add the configured expectations to the expectation store defined in the data context file. There are multiple ways to create data quality expectations. For example you can create these expectations from domain knowledge. You can also use the onboarding data assistant to profile your data and automate the generation of an expectation suite, which you can then adjust to be suited for your specific needs.

The fourth (and final) step is to validate your data. Before running the data validation process, you will need to first create a checkpoint document. When configuring a checkpoint, you can add additional validation data, or set the checkpoint so that validation data must be specified at run time. You can add additional expectation suites, and you can add actions which the checkpoint will execute when it finishes validating data. The common actions include updating data docs, sending emails, posting slack notifications, or sending other custom notifications.

PyDeequ Implementation Guidance

Deequ is a library built on top of Apache Spark for defining “unit tests for data”, which measure data quality in large datasets. Deequ’s purpose is to “unit-test” data to find errors early, before the data gets fed to consuming systems or machine learning algorithms. Deequ works on tabular data, e.g., CSV files, database tables, logs, flattened json files, basically anything that you can fit into a Spark dataframe.

Here you will notice one of the major differences between Deequ and Great Expectations is that Deequ only works with Spark data frame, while Great Expectations support both the Spark and Pandas compute engines. This is not necessarily a bad thing. The reason is, Deequ is built on Apache Spark and is designed to work with very large datasets (think billions of rows) that typically live in a distributed file system or a data warehouse.

Deequ has a Python interface called PyDeequ, which is written to support usage of Deequ in Python. Below are the key components of PyDeequ:

Analyzer and Profile — Both the analyzer and profile modules help to explore big data in large scale, to calculate the metrics required to develop data quality constraints. For example, you can use analyzer to compute the approximate count distinctness of a column, the approximate quantile of a column, and the value of the computed completeness. Analyzer can also be used to do compliance checks, measuring the fraction of rows that complies with the given

column constraint.Constraint Suggestion — PyDeequ provides pre-defined rules and constraints to be suggested on your data. These pre-defined rules include suggesting a categorical range constraint for a column, a NOT NULL constraint, a data type constraint and a unique constraint.

Constraint Verification — Once the constraints are defined, you can run the verification tests on new data. The verifications results, success metrics as well as check status can be saved either as a data frame or as a JSON file.

Metrics Repository — PyDeequ allows users to persist the metrics and verification results computed on spark data frames in JSON format in a central metrics repository. PyDeequ supports two types of metrics repository, one is InMemoryMetricsRepository and the other is FileSystemMetricsRepository. FileSystemMetricsRepository also supports HDFS and S3. Each set of metrics computed needs be indexed by a ResultKey, which contains a timestamp and supports arbitrary tags in the form of key-value pairs. With a metrics repository in place, users can later retrieve the metrics from the repository in different ways.

PyDeequ is very easy to start and supports Spark as the compute engine, however Great Expectations is a more comprehensive data quality management framework.

Delta Live Table Implementation Guidance

Delta Live Table (DLT) is Databrick’s in-house ETL framework, which makes it easy to build and manage reliable data pipelines that deliver high-quality data on Delta Lake. One of the key focuses of DLT is data quality management. It provides mechanisms to prevent bad data from flowing into tables through validation and integrity checks and avoid data quality errors with predefined error policies. It also provides a dashboard to monitor data quality trends over time to get insight into how your data is evolving and where changes may be necessary.

Similar with Great Expectations, you can use expectations to define data quality constraints on your dataset. An expectation consists of a description, an invariant, and an action to take when a record fails the invariant. DLT provides 3 types of policies when records violate the expectations pre-defined expectations:

expect — you can use the expect operator when you want to keep records that violate the expectation. Records that violate the expectation are added to the target dataset along with valid records.

expect or drop — you can use the expect or drop operator to prevent the processing of invalid records. Records that violate the expectation are dropped from the target dataset.

expect or fail — when invalid records are unacceptable, use the expect or fail operator to halt execution immediately when a record fails validation. If the operation is a table update, the system atomically rolls back the transaction.

Summary

Nowadays, more and more business applications and operating systems work with data, with implicit assumptions about that data, for example, some columns must have certain data types and some columns should not contain any missing values. If these assumptions are violated, the applications might crash, the performance of a ML-driven system may deteriorate, or, the business decisions based on data might be biased or even wrong.

Therefore, regardless which data quality framework you eventually select (or you can even build your own data management solutions if it suits the needs of your organization), the most important thing, is to build data reliability and integrity checks into your data pipelines to make sure you provide high-quality data to your data consumers.